The Opportunity Trap of the ChatGPT App Store

In the AI world, context is currency.

Uploading daily sleep data screenshots (HRV, resting heart rate, etc.) from my Amazfit Helio Strap to ChatGPT has helped me correlate sleep quality with caffeine, exercise, screen time, and device usage. After some effort, I managed to extract raw data from the Zepp app (ChatGPT wouldn’t extract data from screenshots) by linking Google’s Health Connect to my Google Drive via Health Sync. Health Connect is replacing Google Fit, but both are still live. That’s Google for you…go figure. I’m still looking for a good Android or desktop app to analyse these CSV files…no luck yet.

The challenges for Apps in ChatGPT

1. The Context Challenge: It would be ideal if ChatGPT could pull in that data directly from Health Connect, and give me feedback daily, across my historical context. I bring this up because ChatGPT gives actionable insights, as opposed to health apps which take a Google-Analytics approach: they give you data, but don’t explain it.

When OpenAI announced apps for ChatGPT two months ago (I had expected that this would take a year), Sam Altman said that they will be interactive, adaptive and personalised, and users can connect their data, trigger actions, and interact with the app in ChatGPT.

MCPs (explained here) are clunky. Engaging with an app that pulls in data and context from an external app requires effort and patience for now. For example, with the Zerodha MCP in Claude, my connection repeatedly gets disrupted, each instance needing a login and 2FA. ChatGPT will eventually enable persistent logins, which were incidentally the primary benefit of apps versus the open web. Claude also exposes the innards of the custom code it writes before delivering a report.

I don’t need to see how a kabab is made from a bakra in order to enjoy it: quite the opposite, in fact.

Claude also struggled with very specific queries, so I gave up, unclear whether Claude or Zerodha was at fault.

The challenge for both ChatGPT and the Apps on it lies in figuring out how much context to take and what data to pull. My old blogging friend Ankit Solanki, the co-founder of Cleartax, explains here, among other things, the challenges of importing context from MCP Servers: It is a communication problem - too much is expensive to process, and too little leads to loss of context and a sub-optimal output.
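One way to picture that trade-off is as a budgeting problem. The sketch below is purely illustrative and is not how ChatGPT or any MCP server actually selects context: it assumes each candidate snippet already carries a relevance score, approximates processing cost as a word count, and greedily keeps the most relevant snippets that fit a budget. All names (`Snippet`, `select_context`) are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Snippet:
    text: str
    relevance: float  # assumed to be scored elsewhere, from 0.0 to 1.0

def cost(snippet: Snippet) -> int:
    # Crude stand-in for a token count: whitespace-separated words.
    return len(snippet.text.split())

def select_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets whose total cost fits the budget."""
    chosen: list[Snippet] = []
    used = 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        if used + cost(s) <= budget:
            chosen.append(s)
            used += cost(s)
    return chosen

candidates = [
    Snippet("Holdings: 12 stocks, mostly large cap", 0.9),
    Snippet("Full trade history for the last five years " * 10, 0.6),
    Snippet("User asked about portfolio concentration risk", 0.8),
]
picked = select_context(candidates, budget=20)
for s in picked:
    print(s.relevance, s.text)
```

With a small budget, the bulky trade history is the first thing to go, which is exactly where the loss-of-context risk comes from: the dropped snippet may have held the detail the answer needed.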

How much to tell someone in response to a query is an issue that humans, not just the socially-awkward ones, grapple with, and it’s fascinating to see AI companies grapple with it too.

Before you read further, do consider supporting my work by making a payment here, if you’re in India, and here if you’re not from India.

2. The User Experience (UX) challenge: OpenAI, which is more consumer focused than Google, explains it really well to developers, to try and goad them into avoiding feature-stuffing:

“Users aren’t “opening” your app and starting on the home page. They’re having a conversation about something and the model can decide when to bring an app into that conversation. They’re entering at a point in time. In that world, the best apps look surprisingly small from the outside. They don’t try to recreate the entire product. Instead, they allow users to access a few specific powers while using the app in ChatGPT: the concrete things your product does best that the model can reuse in any conversation.”

It tells developers that they don’t need to port every feature, don’t need a full navigation hierarchy, but do need to think clearly about how they serve users, and how that integrates into a chat interface. Instead of asking “Where should the user go next”, typical of apps and how they try and hand-hold user behaviour, it says developers should ask: “What can we help with here?”

Also, “That means the “unit of value” is less your overall experience and more the specific things you can help the model and user accomplish at the right moment.”

It is akin to “Jobs-to-be-done”, a framework used by product managers.

3. The Control challenge: When apps in ChatGPT exist to serve tasks, the real question is: who decides which app appears, and when?

When will it recommend a MakeMyTrip over Booking.com for hotel bookings? Businesses are no longer in control of the experience of a user within the app: there is commodification of services. They’re not really in control of recommendations either: to put it simply, the control over the app inside ChatGPT lies with ChatGPT, unless a user specifically asks for the app in the chat. To use a cricket analogy, it is the selector, the umpire, the ground, the conditions and a potential future opposing team, all at the same time. LLMs also carry embedded bias (hello anti-trust) and will eventually need to demonstrate neutrality.

How will ChatGPT decide what to recommend in chat? That decision is stochastic now, with real world implications for businesses. ChatGPT clearly states:

“You’re designing for two audiences:

The human in the chat

The model runtime that decides when and how to call your app”

This is also why, with Google’s AI Mode killing traffic for publishers and websites, the focus is now on AEO (AI Engine Optimisation) and metadata embedding even for news media publications. Like SEO, people will game AEO. Algorithms will catch up, AEO will evolve: It’s in that delta that money lies for those gaming the system. Just like SEO, AEO will be a multi-billion dollar business.

As AI commodifies experiences, app-specific AEO will likely be bigger for services than content. ChatGPT’s guidelines clearly state yours might be “one of several tools the model may orchestrate”.

ChatGPT apps are tools, not destinations.

4. The Privacy Challenge: ChatGPT is worried about exposing the context window, especially since chats contain data inferred from past conversations. So how much will an app get to know about a user? As per the developer guidelines:

“Tools should request the minimum information necessary to complete their task.

Input fields must be directly related to the tool’s stated purpose.

Do not request the full conversation history, raw chat transcripts, or broad contextual fields “just in case.” A tool may request a brief, task-specific user intent field only when it meaningfully improves execution and does not expand data collection beyond what is reasonably necessary to respond to the user’s request and for the purposes described in your privacy policy.

If needed, rely on the coarse geo location shared by the system. Do not request precise user location data (e.g. GPS coordinates or addresses).”

That this needs to be spelled out at all suggests that apps can, technically, request the full raw conversation history. A friend in Big Tech once told me that the old approach was: collect everything first, decide what to do with it later. In this case, it appears that, technically, ChatGPT may not control how much context apps can request.

That is worrying.
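In practice, the guideline quoted above amounts to keeping a tool’s input schema narrow. Here is a minimal sketch of what that might look like for a hotel-search tool, written as a JSON Schema-style Python dict. Every field name, the `DISCOURAGED_FIELDS` list and the `discouraged_fields` helper are my own illustrative assumptions, not anything taken from OpenAI’s SDK or from a real app.

```python
# Hypothetical input schema for a hotel-search tool (JSON Schema-style dict).
# Field names are illustrative, not taken from any real app or SDK.
HOTEL_SEARCH_INPUT = {
    "type": "object",
    "properties": {
        "city": {"type": "string"},
        "check_in": {"type": "string", "format": "date"},
        "check_out": {"type": "string", "format": "date"},
        "guests": {"type": "integer", "minimum": 1},
        "intent": {"type": "string", "maxLength": 200},  # brief, task-specific
    },
    "required": ["city", "check_in", "check_out"],
}

# Assumed names for the kind of "just in case" fields the guidelines warn against.
DISCOURAGED_FIELDS = {
    "conversation_history",
    "chat_transcript",
    "gps_coordinates",
    "home_address",
}

def discouraged_fields(schema: dict) -> set[str]:
    """Return requested property names that appear on the discouraged list."""
    return set(schema.get("properties", {})) & DISCOURAGED_FIELDS

greedy = {
    "type": "object",
    "properties": {
        "city": {"type": "string"},
        "conversation_history": {"type": "array"},
    },
}

print(discouraged_fields(HOTEL_SEARCH_INPUT))
print(discouraged_fields(greedy))
```

A name-based check like this is trivially easy to evade, which is the worry: a policy is only as strong as the platform’s technical ability to enforce it.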

The opportunity for apps in ChatGPT

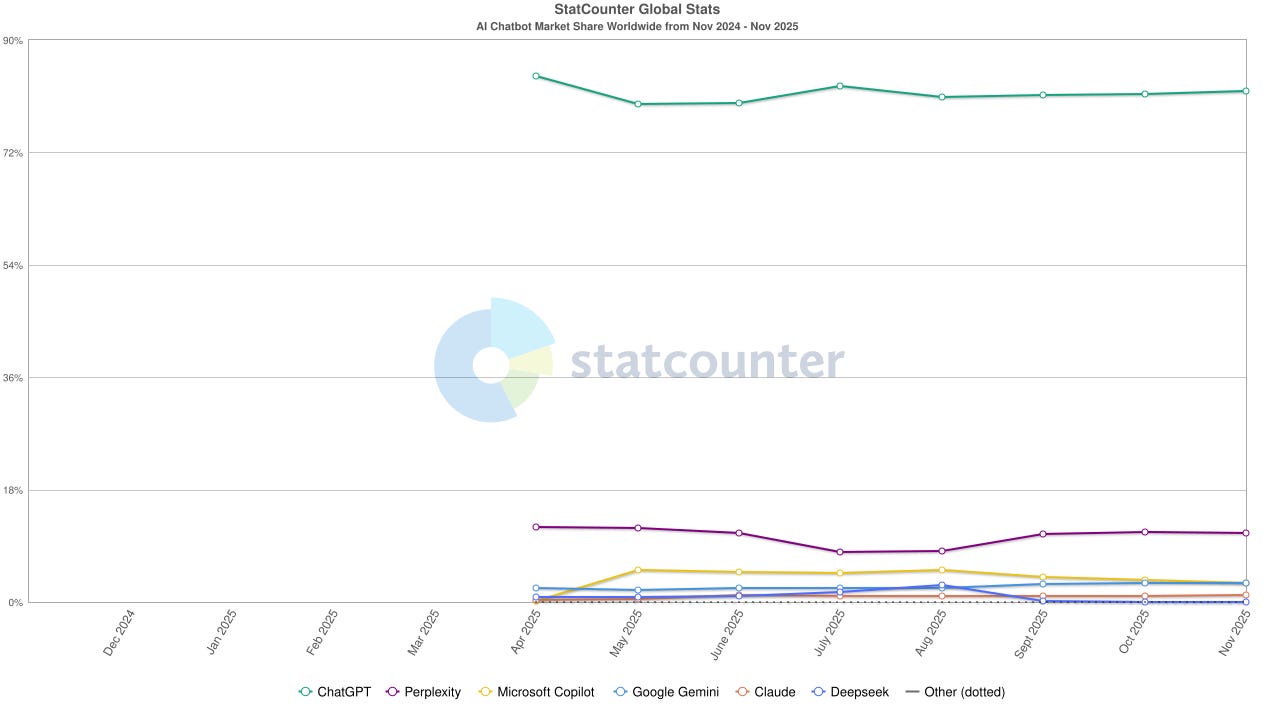

Also, in the Google Search vs ChatGPT battle, AI reduces the need to search, and the cognitive load that goes with it. Over time, our chat context will expose more patterns, and hence results will get more personalised. Google can see this coming, which is why it is gradually killing search with AI Mode. It’s the classic innovator’s dilemma situation: in order to survive, Google has to kill its golden goose before AI does.

To give you a historical Nokia analogy, Search is its burning platform.

And ChatGPT is the one that’s burning the platform. Apps will eventually have to jump to ChatGPT. However, there is opportunity: AI offers developers something that search cannot: context.

In the AI world, context is currency.

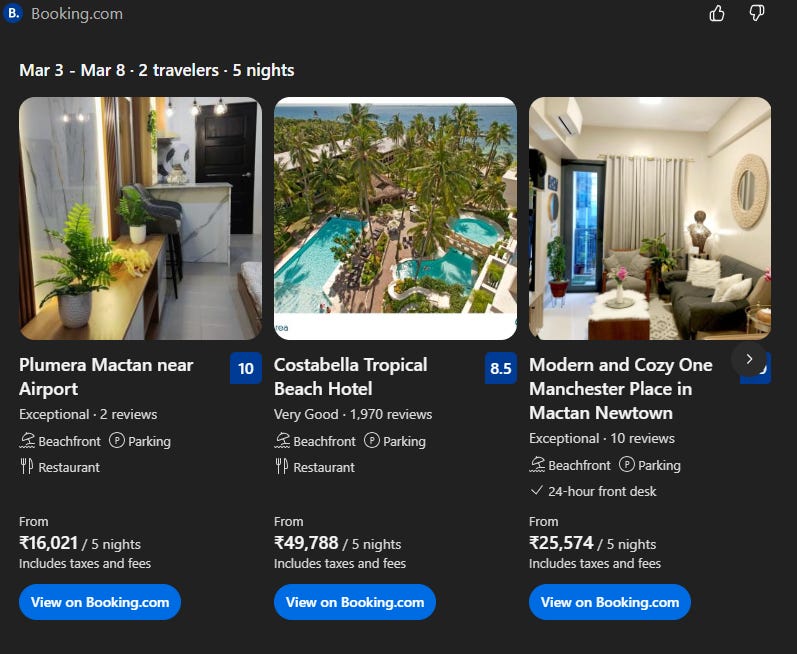

Services can get more context about users (if ChatGPT allows it), and offer greater personalisation and integration with other services. Today you need an AI agent to chain multiple tasks together in order to complete a project. For a holiday, I might use MakeMyTrip for flights, Booking.com for booking a hotel or an apartment, Tripadvisor for reviews for sightseeing, and Viator for booking attractions. Planning an itinerary in ChatGPT would allow me to complete all tasks in one place, with instant checkout. Since Booking currently works without a login within ChatGPT (a login is only required once you exit ChatGPT to complete the transaction on Booking.com), its context is probably currently limited to a session; this will need to change.

Another opportunity for developers lies in serendipitous recommendations: if your jobs to be done are clear, and AEO is sorted, ChatGPT may recommend you in unexpected use cases. For some apps, the GPT store, as clunky and kinda full of badly architected use cases as it was, has been a great way to drive discovery, as it has been for Sanket Shah’s Invideo, which is among the top video apps in the GPT store. The new App integration allows for Invideo to be recommended for a video ad after someone has created a banner ad using Canva.

My experience: The ChatGPT app integration didn’t work well for me. Firstly, ChatGPT suffers from written diarrhea - too much noise compared to the native Booking.com app. Secondly, the recommendation engine isn’t functional: despite the Booking and Tripadvisor apps being available in the store, ChatGPT didn’t prompt a connection with either, and served up web links. Even after I manually connected Booking.com, ChatGPT prioritized generic web results over context from Booking. The Booking.com carousel was marginally useful: dates matched, but key filters like “beachfront” were ignored, and the options were too few to matter. Look at the disconnect between the recommendations and the carousel.

To be fair, it’s early days in the arranged marriage between apps and ChatGPT: ChatGPT and Booking are still learning to understand each other.

The real opportunity lies in data and context being passed between apps via ChatGPT, and in reducing repetition in specifying data by chaining multiple apps together to fulfil a project, like planning a holiday.

This post is a follow up to, and draws from:

The Trap

1. Loss of control: Developers lose control over when their app is triggered. While fine as an add-on, it will become a concern once ChatGPT becomes a primary access point to a service. ChatGPT inadvertently warns developers about this:

“In a real ChatGPT session, your app is rarely the only one in play. The model might call on multiple apps in the same conversation.”

Users don’t typically switch apps once they’ve invested time and effort in setting one up: they avoid relearning an alternate app. That’s why, sometimes, competing apps are similar. Some of that friction may be lost with a ChatGPT-led interface, making apps more fungible. Apps may also lose the user’s exclusive attention: within ChatGPT, “From the user’s perspective, it’s one flow. From your perspective, it’s a reminder that you’re part of an ecosystem, not a sealed product.”

Most people have a single app for a single function: the power of the default. ChatGPT will have its own defaults, and user acquisition won’t be in your control. With functionality narrowly focused on jobs to be done, branding may not be in your control either.

For now, users must leave ChatGPT to edit something like a Canva presentation. With time, more functions will be possible within ChatGPT.

2. Loss of context: ChatGPT severely restricts data collection by developers in policy even though it’s not clear if it can do this technically.

Its developer guidelines explicitly discourage broad data collection: apps should only gather task-specific inputs and avoid “just in case” context or entire chat histories. They must not reconstruct conversations, infer user profiles, or collect metadata unless clearly disclosed and essential. While these safeguards are well-intentioned, they disadvantage developers.

In a typical app login, this kind of context is standard. OpenAI highlights the contrast by reminding developers that in case of native apps, ‘Most product decisions flow from that assumption: “We own the screen.” You can invest heavily in layout, onboarding, and information architecture because users are committing to your space.’

If you invert this, they’re saying to developers that the space inside ChatGPT isn’t theirs. Only ChatGPT can say “We own the screen” inside a chat.

If the roles were reversed, with ChatGPT integrated into apps via its API, developers would likely retain more context, control and visibility. In the App integration construct, they have less context (to keep), and more restrictions, putting them at a structural disadvantage against ChatGPT.

3. A restricted environment: Apps are restricted from enabling discovery of other services within ChatGPT. They cannot “insert unrelated content, attempt to redirect the interaction”. In addition, “apps must not serve advertisements and must not exist primarily as an advertising vehicle.” Among other things, importantly, “Currently, apps may conduct commerce only for physical goods. Selling digital products or services, including subscriptions, digital content, tokens, or credits, is not allowed, whether offered directly or indirectly (for example, through freemium upsells).”

OpenAI makes it clear that “Every app is expected to deliver clear, legitimate functionality that provides standalone value to users”, and nothing more.

In some way, it appears that if you have a ChatGPT App, you’re not a service provider for the customer: you’re a service provider for ChatGPT. At the same time, as usage shifts to AI for answers, solutions and services, developers will have no choice.

As I’ve written previously, platforms are in the business of increasing fragmentation and monetizing aggregation, such that no single entity (except the platform) has enough negotiating power. What we’re seeing here is the platformisation of chat apps.

That’s why it’s an Opportunity Trap. You build for the platform because you see opportunity. More utility brings more users to the platform. The platform uses you to build itself, and entraps you.

If you’ve read this far, and are a subscriber, do share this with someone who might want to read it. Also, if you disagree with something, notice an inaccuracy, or have something to add to this, please do send me a message or leave a comment. The next piece will be on AI and copyright, and I have lots of relevant comments to add to that already. Two themes currently running on Reasoned: AI and Copyright, and AI and the rewiring of the Internet. You can tell them apart from their featured images.

Hi Nikhil. From what I understand, I see two scenarios here.

One, where ChatGPT becomes a super platform, where I make up my mind to use it entirely for a particular context (for example, travel planning). In that case, I'll rely on it for everything from planning itineraries, to discovery and selection of services, to checkout. Notwithstanding the current issues you have pointed out, this could be a seamless, single session, end-to-end experience.

Two, websites like Booking and Viator progressively embed ChatGPT and improve their AI-powered functionalities, to enable more precise discoveries and fulfil user preferences.

My questions here:

1. Given the drawbacks you have pointed out in this article, wouldn't it make more sense for consumer facing companies to improve their apps, which allows them to retain traffic, invest in marketing, improve discovery, and operate with certainty (in contrast to a super app that does not promise the recall of a specific service like Booking.com unless explicitly specified by a user)?

2. I feel a lot of consumer facing apps, from hospitality, to shopping, enjoy a degree of lock in and network effects that AI super apps might find difficult to achieve in the near to medium term future. Plus, as an individual app user, I feel biased to not operate a super platform, both because of lock in, and trust. What I mean by trust, is that I trust the app more in terms of functionalities like reviews, suggestions, photos, deals, and what not. Do you see that as an inherent problem in a super platform like ChatGPT?

3. What is the incentive for consumer facing product companies to build for a super platform like ChatGPT, where the entire sense of a 'product' can become amorphous? I would like to think that retaining their distinct individual identity, a fully functioning transaction space, a higher transaction and earnings probability, would be decisive factors. Additionally, this also incentivizes auxiliary services like payment operators / gateways (who charge a percentage of each transaction from platforms) to support individual platforms, rather than a super platform.

4. Lastly, I think a super platform puts a lot of the burden of discovery (clear articulation of preferences, constraints, ideas, words combined into a single or multiple prompts) on the user, while a sensibly made AI-powered app would more accurately take on some of this burden from the user.

Would appreciate your thoughts on these!