What Meta’s Manus acquisition tells us about the future of agents

This is not an AI model race. It’s an execution-layer race

Happy new year, everyone. This is going to be an exciting year, if you’re obsessed with AI, and I’m breaking my two posts-per-week rule because I can’t stop thinking about the Manus and Meta deal.

If you know how AI Agents work, then you can skip straight to the “Why Meta acquired Manus” section below.

How AI Agents work

The thing you need to know about AI agents is that for the most part, they’re more hype than execution today, but they signal the onset of a significant change in how we work.

At present, what agents do is chain a sequence of actions across multiple tools, with autonomous decision-making thrown into the mix.

Let’s first look at sequencing actions: The earliest tool for combining multiple services that I used, in (probably) 2010, was IFTTT, an abbreviation of IF This (happens) Then (do) That. You could connect your Android phone with Google Sheets such that every time you got a call, the details would automatically be logged in a sheet, or a photo you took would be automatically saved in your Dropbox. Zapier took this interconnection of services and chaining of actions to another level.
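
To make the "IF this THEN that" idea concrete, here is a minimal sketch of a trigger-action rule. The `Rule` type, the event fields and the `call_log` list are all hypothetical names made up for illustration; this is not how IFTTT or Zapier actually expose their services.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical event and rule types, for illustration only.
# They do not mirror IFTTT's or Zapier's actual APIs.
Event = dict


@dataclass
class Rule:
    trigger: Callable[[Event], bool]  # the "IF this" half
    action: Callable[[Event], None]   # the "THEN that" half


def run_rules(rules: list, event: Event) -> int:
    """Fire every rule whose trigger matches; return how many fired."""
    fired = 0
    for rule in rules:
        if rule.trigger(event):
            rule.action(event)
            fired += 1
    return fired


call_log: list = []  # stands in for a spreadsheet

log_calls = Rule(
    trigger=lambda e: e.get("type") == "incoming_call",
    action=lambda e: call_log.append((e["caller"], e["time"])),
)

# Only the call event matches the rule; the photo event is ignored.
run_rules([log_calls], {"type": "incoming_call", "caller": "+91-00000", "time": "10:42"})
run_rules([log_calls], {"type": "photo_taken", "path": "img.jpg"})
print(call_log)
```

Note that the rule has no choices to make: the same event always produces the same action.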

These workflow tools then integrated LLMs, which brought them language, understanding, decision making and memory. I tried connecting an RSS feed or a Google Form to push an article in English to ChatGPT, translate it into Hindi, and save (or publish) the article in WordPress. This is a simple series of actions with limited autonomy and almost no decision making.

At the SuperAI conference in Singapore last year, I saw a fascinating presentation by a social media influencer about how he has completely automated his social media to share updates on hot topics, at the right time, in the manner he wants, without him having to step in. With time, and the advent of n8n in particular, the tasks being executed are becoming increasingly complex. These are chained workflows with LLMs in the middle, not agents. LLMs have allowed these workflows to incorporate thinking, reasoning and language operations. More on that here.

Understanding autonomy: Even as early as a year and a half ago, you could ask browse.new, a browser agent, to find, like I did, court cases filed against Microsoft in the Delhi High Court: it would open a browser window, type in a search, identify a link, go there, find the search box, type in a query, pull results, assess them, filter out irrelevant information, and give me the results. Except, it took ages to do this, because each of these actions runs multiple processes in the background and requires compute. We now have agentic browsers like Perplexity’s Comet that can do similar tasks.

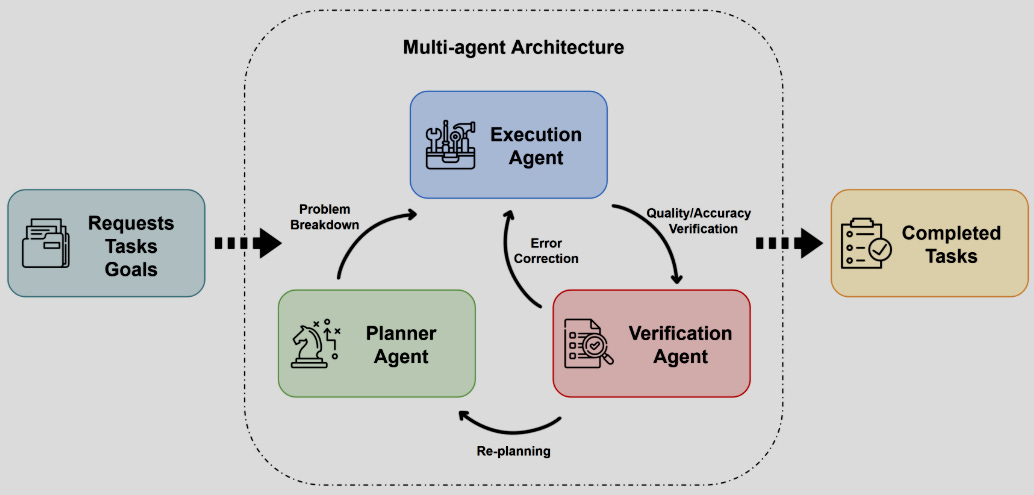

Think about how a fully autonomous agent would need to work:

Understand what needs to be done

Figure out how to do it, and identify actions and specific steps

Find tools that can execute each of these actions, and can communicate with each other

Chain the tools together in the correct sequence

Execute the action and assess the output

Share the output with the user if required
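
The six steps above can be sketched as a small loop. This is a toy under stated assumptions: the tool names, the `PRODUCES` table and the hard-coded `plan` function are all hypothetical, and a real agent would use an LLM to plan and real services as tools.

```python
from typing import Callable

# Hypothetical tools: each takes the current state and returns a new one.
TOOLS: dict = {
    "find_slot": lambda s: {**s, "slot": "13:00"},
    "book_table": lambda s: {**s, "table": f"booked for {s['slot']}"},
    "block_calendar": lambda s: {**s, "calendar": "blocked"},
}

# What each tool is expected to add to the state, used to assess its output.
PRODUCES = {"find_slot": "slot", "book_table": "table", "block_calendar": "calendar"}


def plan(goal: str) -> list:
    """Steps 1-2: turn a goal into ordered steps (hard-coded stand-in for an LLM)."""
    if goal == "lunch":
        return ["find_slot", "book_table", "block_calendar"]
    return []


def run_agent(goal: str) -> dict:
    state: dict = {"goal": goal, "log": []}
    for step in plan(goal):                      # step 4: chain in sequence
        tool: Callable = TOOLS.get(step)         # step 3: find a tool for the step
        if tool is None:
            state["log"].append(f"{step}: no tool")
            break
        state = tool(state)                      # step 5: execute the action...
        if PRODUCES[step] not in state:          # ...and assess the output
            state["log"].append(f"{step}: failed")
            break
        state["log"].append(f"{step}: ok")
    return state                                 # step 6: share with the user


result = run_agent("lunch")
print(result["table"], result["log"])
```

The difference from a fixed workflow is where the plan comes from: here it is produced at run time from the goal, even though this sketch fakes that part.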

For example, at some point in the future, if you and I wanted to meet for lunch, our agents could figure out a time of day that works for both of us. Based on our dietary preferences from our shopping apps, and our restaurant and food ordering history, they could book a table, block our calendars, and book an Uber for each of us if needed, from our expected location, at the right time of departure, taking into account traffic conditions and wait time at the restaurant. For all you know, they could even order food in advance so that we arrive just in time to eat. If you break this down into “jobs to be done” and “decisions to be made”, it is complex but doable.

Challenges with autonomy: At this point in time, each action takes substantially more time: Travelonarrival founder Ankit Sawant illustrated this in a tweet yesterday, saying:

Applied for Thailand Digital Arrival Card for 5 ppl entirely via ChatGPT agent mode

At the final step looked at the preview, verified, & submitted. Agent Mode took around an hour with 16 credits, I could have done it in 15 mins But the freedom of not worrying about the actually process was priceless PS: I gave dummy passport nos & in the final submission edited to add the real ones

Dependencies in autonomous automation: Now let’s look at the dependencies for full automation:

Access to historical context and a large context window

Integration of multiple services together

Integration of LLMs for decision making

Degrees of freedom

The communication gap: the challenge of coordination across tools, and from agent to agent.

Processing power at each stage

Cost of execution

Time

Trust

Cost of Failure and the Risk of error propagation

Depending on your task, the degrees of freedom will also vary, and that will impact complexity. IFTTT had zero degrees of freedom. Connect an AI agent to a humanoid, and you add physical degrees of freedom.

Trust, the cost of failure and the risk of error propagation are also key: I’m more likely to automate social media updates than use agents to book flight tickets. I’m also more likely to automate an anonymous account than one with 200k followers.
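
A rough way to see why error propagation matters: if every step in a chain must succeed, the chance that the whole chain succeeds shrinks with each added step. The sketch below assumes that steps fail independently and share the same success rate, which is a simplification of my own, and the 95% figure is purely illustrative.

```python
def chain_success(step_success: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes steps are independent and equally reliable (a simplification).
    """
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    return step_success ** steps


# Illustrative only: a step that works 95% of the time, chained n times.
for n in (1, 5, 10, 20):
    print(n, round(chain_success(0.95, n), 3))
```

Under these assumptions, a step that works 95% of the time gives a ten-step chain that completes only about 60% of the time, which is why longer autonomous chains need verification along the way.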

This is also why self-driving cars are inordinately complex: they combine substantial degrees of freedom with a high cost of failure, which raises the pressure on decision making and the need for greater certainty in outcomes.

As an aside: think about liability that agents can create. Customer support is the most common use-case for AI agents: human interactions with customers via call centers are costly and time-bound, while agents work around the clock.

The autonomous nature of these interactions means that chatbots can make mistakes, be gamed and prompt-hacked, which brings in questions of liability. Air Canada’s AI chatbot famously promised a customer a bereavement-fare refund that the airline was then ordered to honour. Who should pay when many Alexa devices in a town ordered doll houses after an anchor on a news show said “Alexa buy me a doll house”? People have prompt-hacked AI chatbots to use them for general purpose AI chat, or even to transfer the chat to a human (because AI chatbots can be too templated to engage with).

Why Meta Acquired Manus

The idea behind Manus fits rather well, not with Meta’s consumer side of things, but within its Business AI construct.

Business AI is Meta’s AI-powered sales and support concierge that sits across Meta’s ad, messaging, and web surfaces, designed to increase conversion and reduce missed sales opportunities. It is focused on enabling conversations and commerce on Meta platforms, and acts as a sales assistant inside Ads, Messenger, WhatsApp and websites. As per its website, the goal for Business AI within Meta is to “Deliver tailored product recommendations for every shopper based on their unique needs and preferences”, as well as to provide customer support and data about advertising performance.

Meta’s Business AI brings context from Meta and the business (ad campaigns, product catalogs, and website) into the conversation, across messaging apps. With time, as historical interactions get stored, Business AI should ideally (no guarantees) end up responding with more context, and solve consumer problems for the business better, thus improving conversations.

In the last Meta earnings conference call, founder Mark Zuckerberg highlighted what Business AI means for the company:

“So the ability to reason more intelligently is, for example, very important across a large number of things. It would be useful for an assistant. It will also be useful in business AI. It will also be useful in the AI agent that we’re building to help advertisers figure out what their campaigns are going to be.”

…

“I mean, certainly the capability to be able to produce very high quality good video is going to be useful for giving people new creative tools” … “It should help advertisers be able to create creative that will help us monetize better.”

…

“Every day, people have more than 1 billion active threads with business accounts across our messaging platforms -- ranging from product questions to customer support. Our Business AIs will enable tens of millions of businesses to scale these conversations and improve their sales at low cost. The better our models get, the better this is going to work for all businesses.”

Meta CFO Susan Li added:

“We’re also making good progress on our Business AI efforts, where we’ve been focused on building a turnkey AI that helps businesses generate leads and drive sales. We’ve been opening access in recent months to more businesses within our initial test markets, the Philippines and Mexico, and have seen strong usage, with millions of conversations between people and Business AIs taking place since July. This month, we expanded availability within WhatsApp and Messenger to all eligible businesses in Mexico and the Philippines, respectively. In the US, we’re also starting to roll out the ability for merchants to add their Business AIs to their website so we can support the full sale funnel from ad to purchase.”

Now how does Manus fit into this? Manus doesn’t replace Business AI but enhances it.

Tens of millions of SMBs and enterprises already use Meta platforms for messaging-based commerce, customer support and lead generation. Business AI generates content, optimises ads, and handles conversations and chats. If you zoom out, it is focused on funnel optimisation, in order to reduce wasted spend.

Advertising is becoming an adaptive system, not a campaign. Agents shift value from insight generation to outcome control.

Manus has demonstrable context-aware decision making, and because it was trained “on multi-modal data (text, images and code) and multi-task learning”, it can work across modes.

From this paper on Manus:

One distinguishing aspect of Manus AI is its context-aware decision making. Rather than executing single-step commands, Manus maintains an internal memory of context and intermediate results as it works through a problem. This means it can take into account the evolving state of a task and user-specific preferences when deciding the next action. The underlying models use sequence-to-sequence predictions to determine the most logical next step, and they update an internal plan as new information is obtained.

What does this mean? That “if a user asks Manus to ‘analyze sales data and suggest strategies,’ Manus will not only compute trends but also decide what types of analyses and visualizations are relevant, and then proceed to generate actionable insights, much as a human analyst might.” The ability to work across data types, call tools when needed, code when needed, evolve its decisions and actions based on intermediate data, means that Manus potentially can do much more than an LLM or a chained sequence of tools can.

(Source)
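
To illustrate what "context-aware decision making" could look like for the sales example quoted above, here is a toy loop in which the next action depends on what earlier actions found. It is only a sketch: the step names, the `memory` dictionary and the rules in `next_step` are hypothetical, and this is not how Manus is actually implemented.

```python
from typing import Optional


def next_step(memory: dict) -> Optional[str]:
    """Pick the next action from what has been learned so far."""
    if "trend" not in memory:
        return "compute_trend"
    if memory["trend"] < 0 and "by_region" not in memory:
        return "break_down_by_region"  # only worth doing if sales are falling
    if "summary" not in memory:
        return "summarise"
    return None  # nothing left to do


def run(sales: dict) -> dict:
    """sales maps a region name to a list of period totals, oldest first."""
    memory: dict = {}
    steps: list = []
    while (step := next_step(memory)) is not None:
        steps.append(step)
        if step == "compute_trend":
            totals = [sum(period) for period in zip(*sales.values())]
            memory["trend"] = totals[-1] - totals[0]
        elif step == "break_down_by_region":
            memory["by_region"] = {r: v[-1] - v[0] for r, v in sales.items()}
        elif step == "summarise":
            if "by_region" in memory:
                worst = min(memory["by_region"], key=memory["by_region"].get)
                memory["summary"] = f"falling, led by {worst}"
            else:
                memory["summary"] = "growing or flat"
    memory["steps"] = steps
    return memory


print(run({"north": [10, 12], "south": [10, 5]})["steps"])
print(run({"north": [10, 12]})["steps"])
```

The two calls take different paths: the regional breakdown runs only when the first result shows sales falling, which is the kind of intermediate-result branching a fixed chain of tools does not do.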

If LLMs are analysts, agents are managers. They don’t just answer questions: they decide priorities, delegate tasks, and revise plans based on results. Platforms win agent adoption when agents reduce decision burden, not just execution cost.

From Manus’ July 2025 post, just 3 months after it launched:

Teachers have built interactive lesson plans with custom graphics. Small business owners have automated their entire content creation pipelines. Researchers have synthesized literature across multiple languages and disciplines. Students have crafted portfolio websites that showcase their work in extraordinary ways.

The paper I’ve linked to earlier points towards where Manus can be used in entertainment:

Personalisation of storytelling: “Imagine an interactive storytelling app [28] where Manus is the storyteller: it takes a user’s inputs (preferred genre, characters they like) and spins up a personalized short story or even a short animated movie by controlling generative models for images and voices. As the user reacts or provides feedback, Manus adjusts the narrative, essentially improvising a film or game tailored to one person.”

Implementing feedback loops: “An agent that autonomously sifts through audience comments or critiques and then suggests concrete improvements for a show would be extremely valuable. Some studios are already using AI to provide data-driven predictions on how unusual story elements will land with viewers [29]. An AI like Manus could take those predictions and directly implement changes in the script or edit, creating a more efficient feedback loop.”

Now take this same thinking to advertising, e-commerce and customer support, and Manus can potentially help businesses optimise sales and product development end-to-end, with minimal human involvement.

I used AI to replicate this assessment for advertising:

Personalisation of Ads: Imagine an Instagram ad that adapts to each shopper: it takes a user’s signals (what they tap, watch, ask, or comment) and dynamically assembles a personalised product story. As the user reacts or provides feedback, the ad adjusts its visuals, messaging, and recommendations—improvising a sales narrative tailored to one person.

Implementing feedback loops: An agent that autonomously analyses ad comments, DMs, and drop-offs and then implements concrete changes to creative would be extremely valuable. Instead of surfacing insights for later optimisation, an AI like Manus could directly update ad narratives, product emphasis, and responses in-flight, creating a faster, self-correcting feedback loop.

Therefore, instead of fixed scripts (campaigns), ads become live performances that adapt to audience reactions scene by scene.

You can copy-paste this into a chatbot and give it other scenarios. The use cases go beyond this, to presentations, email and social media management, and design.

Will Manus be useful for Meta’s wearables?

The Ray-Ban Meta glasses are always on, context aware, and proactive (thankfully not always recording). It’s too early, but Manus could become an “action layer” for wearables, handling real-world tasks for someone wearing the glasses (like “book a table for me” or “call me an Uber”), and expanding the scope of the AI assistant to task delegation. But this is potential. Manus hasn’t done that yet.

Manus is still closer to a powerful prototype than a mature enterprise system. Even so, Meta gets an agentic system that is already in production, which buys it speed, something that Mark Zuckerberg prioritises in highly competitive situations like AI.

This acquisition speeds up Manus’ development because it gets Meta’s scale of content, context and social interactions to learn from, across billions of users on Meta platforms. This means that mass-market automation will probably arrive faster, though it will still be impeded by largely three things: the AI-human trust gap, execution time, and a cost of failure that grows with the degrees of freedom (I’m repeating this in case you skipped the initial section).

Meta can make Manus enterprise ready.

Why Meta moved now

Manus, like much of the agents industry, faces an onboarding and trust challenge. In July 2025, Manus said:

Despite our capabilities, we discovered that many users didn’t know what type of complex task they should assign to Manus. The paradox of infinite possibility created unexpected friction. When faced with a tool that can do anything, users can struggle to decide what to ask first.

Early implementation was also costly and slow. The computational resources required for truly autonomous task completion meant longer wait times and higher costs than users expected, creating barriers to regular usage and experimentation.

A couple of weeks ago, Manus said they were doing $100 million of Annual Recurring Revenue (essentially, monthly subscription revenue annualised), and a revenue run rate of $125 million, which is significant for a company still in its first year. A valuation of $2 Billion is 20 times ARR.
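
The multiple is simple division, worked through here using the figures quoted above. The function name is my own, and the second figure (the multiple on run rate) is derived from the same numbers rather than stated by Manus or Meta.

```python
def revenue_multiple(valuation_usd: float, annual_revenue_usd: float) -> float:
    """Valuation divided by annualised revenue."""
    if annual_revenue_usd <= 0:
        raise ValueError("annual revenue must be positive")
    return valuation_usd / annual_revenue_usd


VALUATION = 2_000_000_000  # $2 billion, as quoted above
ARR = 100_000_000          # $100 million annual recurring revenue
RUN_RATE = 125_000_000     # $125 million revenue run rate

print(revenue_multiple(VALUATION, ARR))       # 20.0
print(revenue_multiple(VALUATION, RUN_RATE))  # 16.0
```

Measured against the broader run rate rather than subscription ARR, the same valuation works out to 16 times revenue.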

These are obviously very early days, which makes me wonder why Manus sold this early. Were they afraid that their early advantage could fade with time, given competition from better capitalised competitors? We don’t know the internal pressures that shaped this decision.

Clearly, Meta saw enough in Manus to swoop in and buy it. What works for Meta especially is that while OpenAI might be further ahead in its AI journey and adoption with ChatGPT, it is still trying to build advertising and commerce integrations. Anthropic and OpenAI are already growing tool orchestration, while Meta had nothing. Manus fills an important gap for Meta, and also benefits significantly from Meta’s already robust business stack.

MIT’s State of AI in Business report from August 2025 highlights, strangely, that time is running out for tool adoption among enterprises:

The window for crossing the GenAI Divide is rapidly closing. Enterprises are locking in learning-capable tools. Agentic AI and memory frameworks (like NANDA and MCP) will define which vendors help organizations cross the divide versus remain trapped on the wrong side.

“In the next few quarters, several enterprises will lock in vendor relationships that will be nearly impossible to unwind. This 18-month horizon reflects consensus from seventeen procurement and IT sourcing leaders we interviewed, supported by analysis of public procurement disclosures showing enterprise RFP-to-implementation cycles ranging from two to eighteen months. Organizations investing in AI systems that learn from their data, workflows, and feedback are creating switching costs that compound monthly.”

This is not an AI model race: it’s an execution-layer race. I think enterprises will still dither over these decisions: Agentic systems can create lock-in through memory accumulation, not just feature breadth, and it’s likely that enterprises will fear choosing the wrong vendor. Meta will probably not play this game, and instead look to integrate with enterprise AI tools.

What we don’t know about this deal

1. Whether Manus becomes integrated into Business AI and loses its identity, or remains a product that Meta adds on. This is a B2B acquisition, unlike the consumer acquisitions of Instagram and WhatsApp.

2. How Manus will handle autonomy at Meta scale, given the degrees of freedom and the risk of error propagation, and how it will handle pre-outcome verifications.

3. How this impacts privacy in Meta: Memory is a moat (so far). The State of AI in Business report points out:

“Unlike current systems that require full context each time, agentic systems maintain persistent memory, learn from interactions, and can autonomously orchestrate complex workflows.”

Essentially, effective autonomous agentic orchestration requires memory across functions, and it learns from interactions. It’s not clear how data separation and consent will play into this.

4. Whether Manus becomes verticalised (customer purchase lifecycle management and wearables), or remains broad-purpose (finance, health, research etc.) with parts of it integrated into Business AI.

To this point, Manus had highlighted a roadmap in July 2025:

First, information gathering is a universal need for knowledge workers. We’re developing Scheduled Tasks for recurring research, Wide Research capabilities that span diverse sources, Omni Search that understands intent and context, and access to more specialized domain data sources.

Second, people deal with different file formats every day in their work. We’re expanding our capabilities for Slides with advanced templates and interactivity, Image & Video & Audio generation for diverse creative needs, and Report creation that automates documentation while maintaining quality and consistency.

Third, people navigate between multiple apps all the time. We’re integrating into more daily working apps to streamline workflows, including Email management and composition, Calendar preparation and follow-up, Drive organization and File management, and Task management coordination across projects and teams.

Let’s see what becomes of it over the next few years.

Lastly

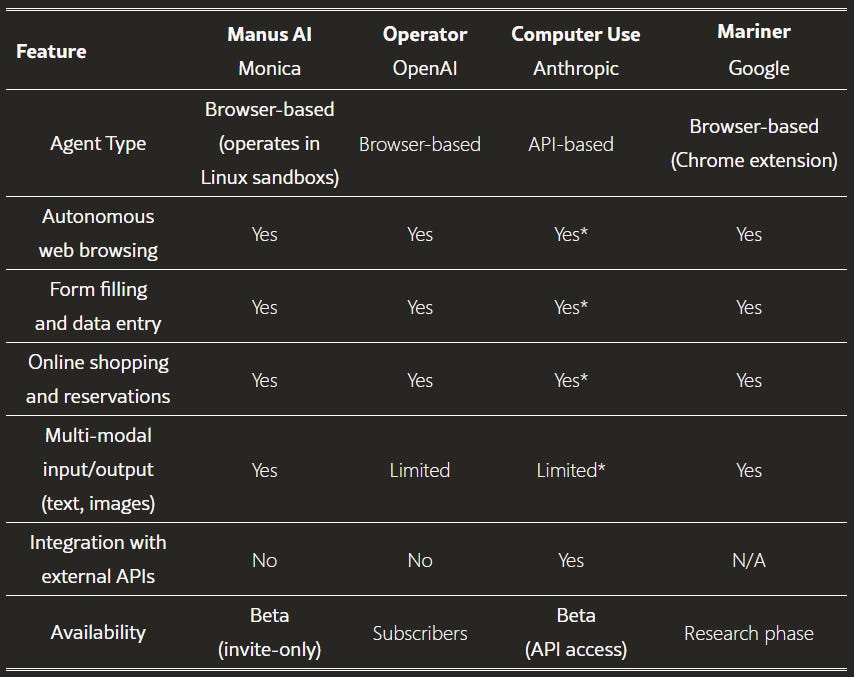

If you’re wondering why none of the others went for Manus: Google has Project Mariner and has set up its own Agent2Agent (A2A) protocol. OpenAI has Operator. Meta had nothing. Manus is more of an orchestration layer (it uses Alibaba’s Qwen and Anthropic’s Claude) than a foundation model. These dependencies will have to be worked out by Meta.

We’re just at the beginning of deployment of autonomous agents, but it’s clear from the impact that LLMs had on content writing, graphic design and coding, that agents will impact actions. The immediate early-stage impact of agents has been on customer support, but expect other domains like Media Buying and vendor negotiations to be impacted as well.

In May, on the flight back from Singapore, I wrote an AI replacement theory:

AI replaces creators (writers, graphic designers, coders)

AI replaces digital task managers with agents

AI+Robotics replaces physical task managers (drivers, delivery workers etc).

AI+Robotics+3D Printing replaces creators in the physical world: Construction, production etc

I don’t know how far this is going to be true, and whether the replacement will be absolute. So far, it’s moving in this direction, and I will be keeping an eye on how agents evolve.

*

Do also read: The Future of Humanoid Robots
